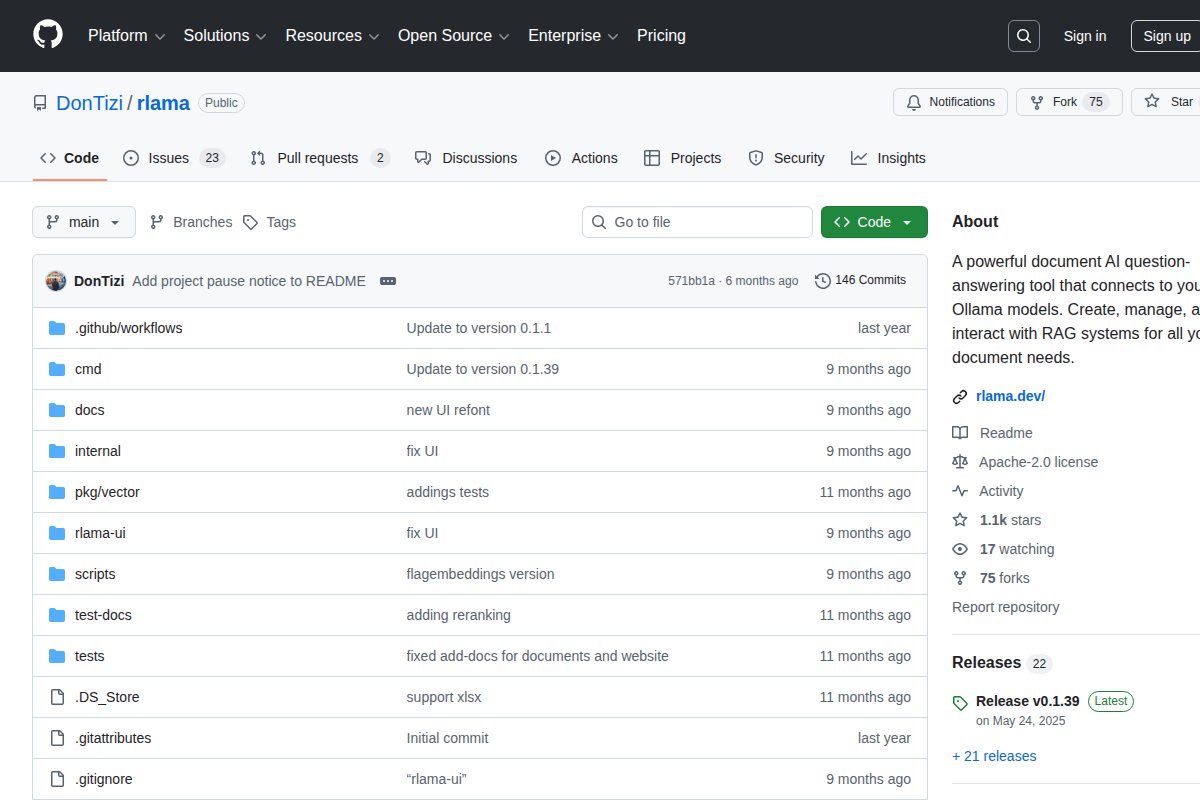

RLAMA

Open-source RAG CLI for Ollama - chat with your local documents

About RLAMA

RLAMA works 100% offline, is open source and completely free to use, and runs on a CPU without a dedicated GPU.
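RAG (retrieval-augmented generation) means the tool first finds the passages in your documents that are most relevant to a question, then hands them to the language model as context for its answer. The sketch below illustrates only that retrieval step, in generic form. It is not RLAMA's implementation. It assumes a toy bag-of-words cosine similarity, where real tools typically compare embedding vectors produced by a model. The function names (`retrieve`, `build_prompt`) are hypothetical, and the final step of sending the prompt to a local Ollama model is left out.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    """Lowercase word tokens; a crude stand-in for a real tokenizer."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two bag-of-words count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def retrieve(question: str, chunks: list[str], k: int = 2) -> list[str]:
    """Hypothetical helper: return the k document chunks most similar to the question."""
    q = Counter(tokenize(question))
    ranked = sorted(chunks, key=lambda c: cosine(q, Counter(tokenize(c))), reverse=True)
    return ranked[:k]


def build_prompt(question: str, context: list[str]) -> str:
    """Hypothetical helper: ask the model to answer from the retrieved context only."""
    joined = "\n---\n".join(context)
    return f"Answer using only this context:\n{joined}\n\nQuestion: {question}"


if __name__ == "__main__":
    # Toy "local documents", already split into chunks.
    chunks = [
        "The invoice is due on March 3.",
        "Our office is closed on public holidays.",
        "Invoices are paid by bank transfer.",
    ]
    question = "When is the invoice due?"
    top = retrieve(question, chunks, k=1)
    print(top)  # ['The invoice is due on March 3.']
    print(build_prompt(question, top))
```

Splitting documents into chunks and ranking them keeps the prompt small enough for a local model to handle, which matters when everything runs on a CPU.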

Platform Support

Available for: Windows, macOS, Linux

System Requirements

- Minimum RAM: 8 GB

- GPU: Not required (runs on CPU)

Links

Official Website · GitHub Repository

Frequently Asked Questions

What is RLAMA?

RLAMA is an open-source RAG (retrieval-augmented generation) CLI for Ollama that lets you chat with your local documents. It works 100% offline and runs on a CPU without a dedicated GPU.

Is RLAMA free?

Yes, RLAMA is completely free to use. It's also open source.

Does RLAMA work offline?

Yes, RLAMA works 100% offline once installed.

What platforms does RLAMA support?

RLAMA is available for Windows, macOS, and Linux.

Related Tools

Ollama

Run large language models locally with a simple CLI interface

LM Studio

Discover, download, and run local LLMs with an easy-to-use desktop app

Jan

Open-source ChatGPT alternative that runs 100% offline on your computer

GPT4All

Free-to-use, locally running, privacy-aware chatbot by Nomic AI

Text Generation WebUI

The AUTOMATIC1111 of text generation - maximum control for LLMs

KoboldCpp

Easy-to-use AI text generation software for GGML/GGUF models